In performance marketing, most Meta ad accounts for Thailand e-commerce brands don't lose because the budget is too small. They lose because the team treats creative testing like a slot machine, pulling too many levers at once.

A strong Meta ads testing framework fixes that. For Thailand e-commerce brands, it helps you learn faster, spend with more control, and spot winners before fatigue sets in. That matters in beauty, fashion, supplements, home and living, and FMCG, where the feed moves fast and buyers make snap calls.

In 2026, the Meta algorithm, especially in Advantage+ Shopping Campaigns, does more of the heavy lifting. Targeting is broader, placements are more automated, and creative does more of the sorting. In other words, your ad is no longer just the message; it's also the filter.

That shift is even sharper in Thailand. Buyers are mobile-first, social-commerce habits are strong, and content gets judged in seconds. A serum demo, a try-on clip, or a bundle offer can win fast, but only if the visual hooks land.

Recent 2026 analyses, such as RedClaw's Meta ads creative testing framework, point to the same pattern: fresh concepts beat endless micro-edits. Meta also appears to reward creative diversity more than tiny headline changes, and diverse concepts tend to lift CTR and lower CPA. So swapping one adjective in an ad that already suffers from creative fatigue won't save performance.

If you're adapting global assets for the local market, strong social-first content strategies in Thailand matter because humor, proof, price framing, and creator style all shift by market.

When targeting gets broader, creative becomes the sharpest tool left in the box.

One more 2026 wrinkle matters. Meta's GEM-style delivery can spread influence across touchpoints. That means one ad may assist another. So, don't judge every creative by isolated CPA alone. Watch the full campaign and account trend, then decide.

A good framework should feel plain. That's the point. It removes drama and makes learning repeatable.

For small to mid-sized budgets, keep setup simple. Organize your campaign structure around ABO (Ad Set Budget Optimization) with one prospecting test campaign per product cluster, not per ad idea. Reserve CBO (Campaign Budget Optimization) for scaling winning ads. For example, group acne serum, collagen gummies, or sofa covers into separate test lanes. Keep audience, placement, landing page, and offer rules stable inside each ad set.

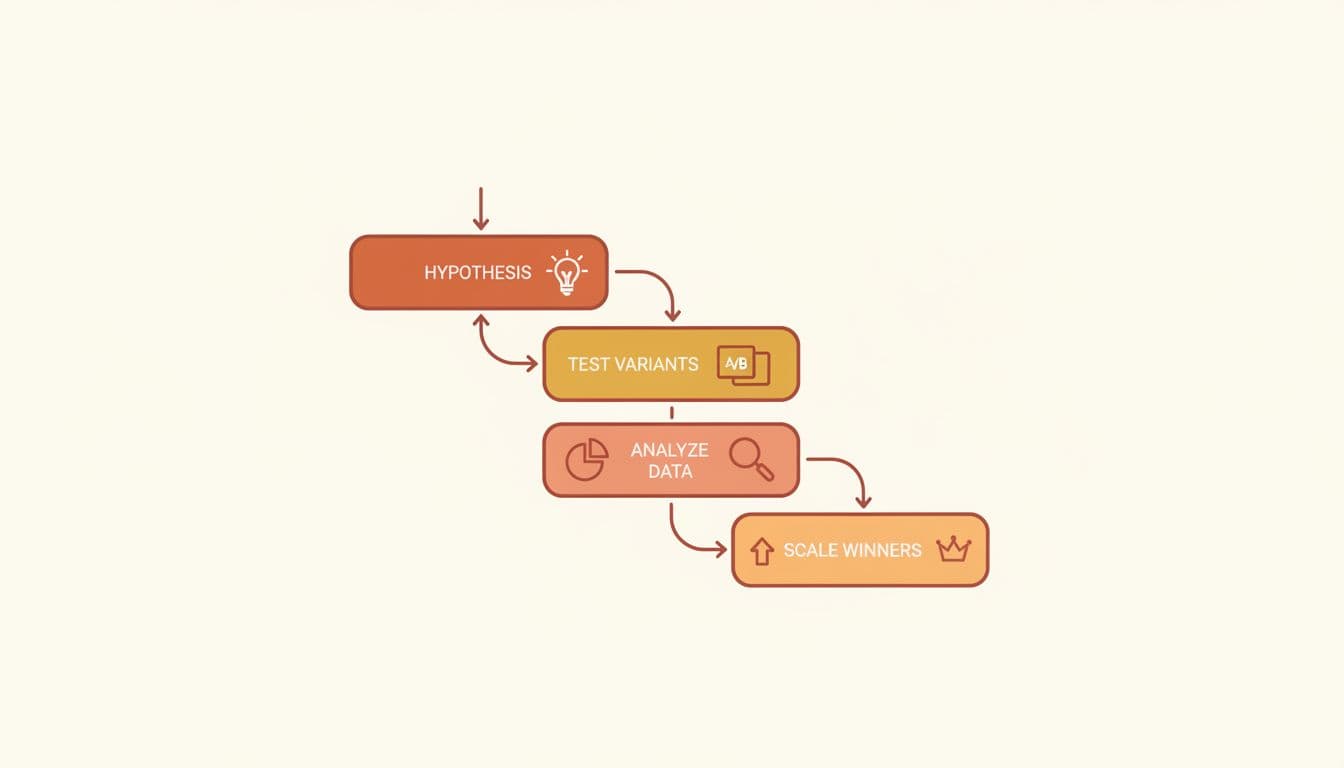

Then run this four-step loop for creative testing:

Example ad name: `TH_BEAUTY_PROS_Hook-Problem_Format-UGC_Offer-Bundle_V1`

A realistic cadence is 2 to 4 new concepts per week. That's enough to keep the account fresh without flooding delivery. AdStellar's 2026 testing methods make a similar case for steady creative testing over random bursts.
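A consistent naming scheme makes it easy to group results by the variable under test. The sketch below builds and parses names shaped like `TH_BEAUTY_PROS_Hook-Problem_Format-UGC_Offer-Bundle_V1`. It is a minimal Python example under stated assumptions: the field order (market, category, funnel stage, hook, format, offer, version) is inferred from that one name, `PROS` is read as prospecting, values must not contain underscores, and `AdName` and `parse_ad_name` are hypothetical helpers, not part of any Meta tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdName:
    """Hypothetical ad-name layout: MARKET_CATEGORY_STAGE_Hook-X_Format-Y_Offer-Z_Vn."""
    market: str
    category: str
    stage: str       # assumption: "PROS" means prospecting
    hook: str
    ad_format: str
    offer: str
    version: int

    def build(self) -> str:
        """Assemble the ad name string from its fields."""
        return (
            f"{self.market}_{self.category}_{self.stage}"
            f"_Hook-{self.hook}_Format-{self.ad_format}_Offer-{self.offer}"
            f"_V{self.version}"
        )


def _strip_prefix(token: str, prefix: str) -> str:
    """Return token without its required prefix, or raise if the prefix is missing."""
    if not token.startswith(prefix):
        raise ValueError(f"expected {prefix!r} at start of {token!r}")
    return token[len(prefix):]


def parse_ad_name(name: str) -> AdName:
    """Split an ad name back into its fields; raises ValueError if malformed."""
    parts = name.split("_")
    if len(parts) != 7:
        raise ValueError(f"expected 7 underscore-separated parts, got {len(parts)}: {name!r}")
    market, category, stage, hook, fmt, offer, version = parts
    if not version.startswith("V") or not version[1:].isdigit():
        raise ValueError(f"expected a version tag like 'V1', got {version!r}")
    return AdName(
        market=market,
        category=category,
        stage=stage,
        hook=_strip_prefix(hook, "Hook-"),
        ad_format=_strip_prefix(fmt, "Format-"),
        offer=_strip_prefix(offer, "Offer-"),
        version=int(version[1:]),
    )


ad = parse_ad_name("TH_BEAUTY_PROS_Hook-Problem_Format-UGC_Offer-Bundle_V1")
print(ad.hook, ad.ad_format, ad.offer)  # Problem UGC Bundle
```

Parsed this way, exported ad-level results can be grouped by `hook`, `ad_format`, or `offer` instead of eyeballing long ad names.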

Decision rules matter because false positives waste money. Don't crown a winner after a few hours. Don't test a new audience and a new ad at the same time. Don't compare weekday data against weekend data as if they mean the same thing. Wait for statistical significance and completion of the learning phase to avoid rushed calls.
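To check whether a CTR or conversion-rate gap is more than noise, a standard two-proportion z-test is one simple option. This is a generic statistics sketch, not how Meta's own A/B testing tool computes its results, and the click and impression counts below are hypothetical. Pull both ads' numbers from the same date window so weekday and weekend traffic don't get mixed.

```python
import math


def two_proportion_z_test(successes_a: int, trials_a: int,
                          successes_b: int, trials_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: rate_a == rate_b, using the pooled rate."""
    if trials_a <= 0 or trials_b <= 0:
        raise ValueError("trials must be positive")
    rate_a = successes_a / trials_a
    rate_b = successes_b / trials_b
    pooled = (successes_a + successes_b) / (trials_a + trials_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / trials_a + 1 / trials_b))
    if se == 0:
        # Both rates are 0% or both are 100%: no detectable difference.
        return 0.0, 1.0
    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal tail: P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical: control 200 clicks / 20,000 impressions vs variant 260 / 20,000.
z, p = two_proportion_z_test(200, 20_000, 260, 20_000)
print(f"z={z:.2f}, p={p:.4f}")
```

A low p-value on CTR alone only says the click rate differs; it doesn't say the ad sells better.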

A practical rule is this: let each variant gather spend of roughly 1.5 times your target CPA, or enough impressions to show stable click and hold patterns against baseline metrics. If CTR rises but conversion rate or CPA worsens, that ad hasn't won. It only looked good from far away.
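The decision rule can be written as a small function. This sketch assumes a hypothetical `VariantStats` record with spend, impressions, clicks, and conversions pulled from your reporting, and uses a `spend_multiple` of 1.5 from the rule above; the impression-based alternative isn't modeled. It only returns a label and doesn't touch the account.

```python
import math
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Hypothetical per-ad totals for one test window."""
    spend: float        # account currency, e.g. THB
    impressions: int
    clicks: int
    conversions: int

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def cvr(self) -> float:
        # Conversion rate measured per click.
        return self.conversions / self.clicks if self.clicks else 0.0

    @property
    def cpa(self) -> float:
        # No conversions yet means an unbounded cost per acquisition.
        return self.spend / self.conversions if self.conversions else math.inf


def judge_variant(variant: VariantStats, baseline: VariantStats,
                  target_cpa: float, spend_multiple: float = 1.5) -> str:
    """Label a variant against the baseline using a spend gate and downstream checks."""
    # 1. Not enough spend yet: don't crown or kill anything.
    if variant.spend < spend_multiple * target_cpa:
        return "keep running"
    # 2. A higher CTR doesn't count if conversion rate or CPA got worse.
    if variant.cvr < baseline.cvr or variant.cpa > baseline.cpa:
        return "not a winner"
    # 3. Downstream metrics held up and CPA improved: worth a closer look.
    if variant.cpa < baseline.cpa:
        return "candidate winner"
    return "no clear difference"


baseline = VariantStats(spend=9_000, impressions=60_000, clicks=600, conversions=30)
flashy = VariantStats(spend=600, impressions=8_000, clicks=120, conversions=2)
print(judge_variant(flashy, baseline, target_cpa=300))  # not a winner
```

The `flashy` variant has the higher CTR (1.5% vs 1.0%) but converts far fewer of its clicks, so it is labeled `not a winner`. A `candidate winner` still needs statistical significance and a look at the full campaign trend before you scale it.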

Start with the big rocks in creative testing. Variations on major creative angles such as hook, format, and offer usually move results more than button text or tiny copy edits.

This table keeps priorities clear:

| Creative Angles | Ad Variations | Thailand e-commerce example |

|---|---|---|

| Hook | Problem-first vs result-first | Beauty serum, irritated skin first vs clear-skin result first |

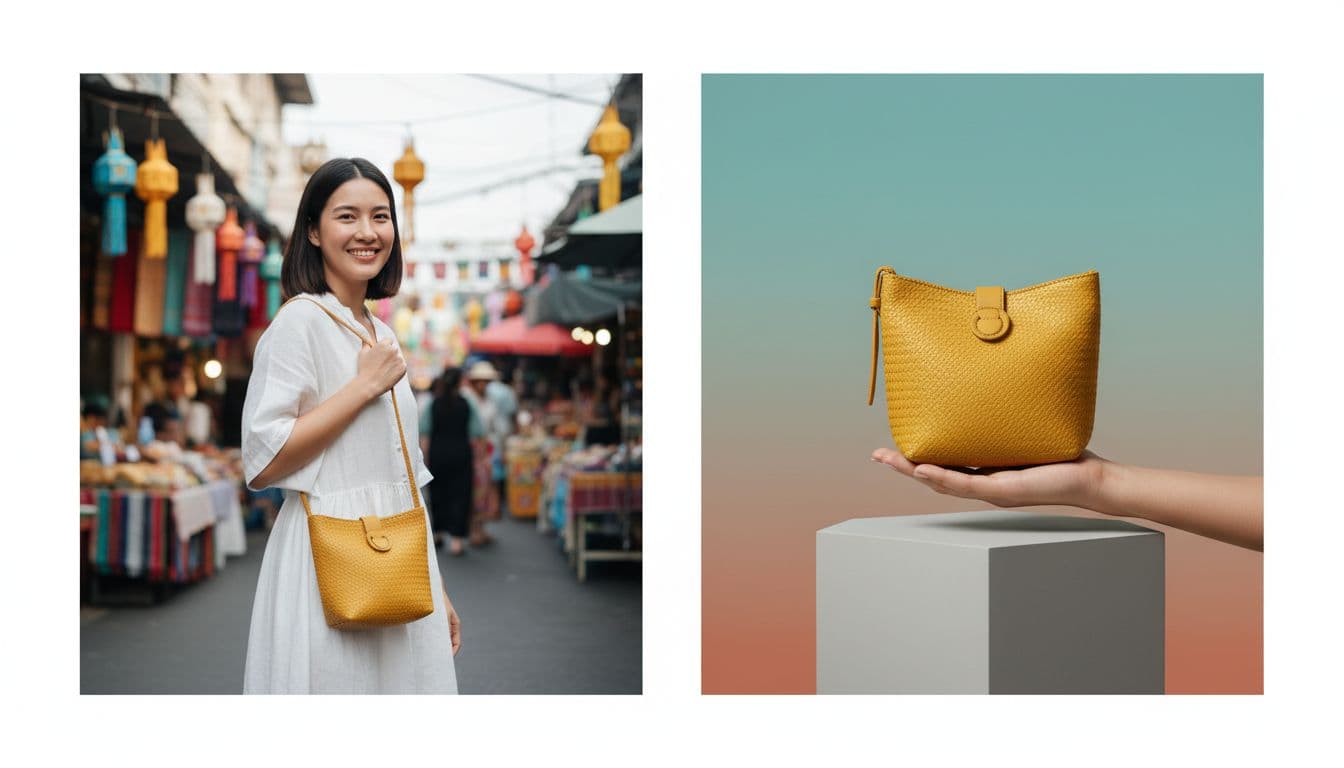

| Format | UGC selfie video vs static images | Fashion try-on clip vs polished product showcase |

| Offer | Discount vs free shipping vs bundle | Supplements, 15% off vs buy 2 get 1 |

| Thumbnail | Face close-up vs product-in-use | Home storage, clutter-before shot vs neat shelf shot |

| Copy | Short punch line vs proof-led copy | FMCG snack, taste-first line vs review-led line |

UGC often wins cold traffic because it feels like a friend's tip. That works well for beauty, supplements, and everyday household products. On the other hand, static images can win when the item needs polish or detail, such as premium fashion, gift sets, or design-led home goods.

In creative testing, use the 60-30-10 rule for budget allocation: 60% to scaling winning ads, 30% to winner variations, and 10% to new tests.
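The 60-30-10 split is simple arithmetic, but writing it down avoids rounding drift when budgets are entered in whole baht. A minimal sketch, assuming an integer total in THB and a hypothetical `split_budget` helper; any remainder from integer division goes to the scaling bucket.

```python
def split_budget(total_thb: int,
                 weights: tuple[int, int, int] = (60, 30, 10)) -> dict[str, int]:
    """Split a whole-baht budget into scaling, winner-variation, and new-test buckets."""
    if sum(weights) != 100:
        raise ValueError("weights must sum to 100")
    labels = ("scale_winners", "winner_variations", "new_tests")
    shares = [total_thb * w // 100 for w in weights]
    # Integer division can leave a few baht unassigned; give them to scaling.
    shares[0] += total_thb - sum(shares)
    return dict(zip(labels, shares))


print(split_budget(50_000))
# {'scale_winners': 30000, 'winner_variations': 15000, 'new_tests': 5000}
```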

Thumbnails deserve more attention than most teams give them. These visual hooks pack thumb-stopping power. On Meta, the opening frame is your shop window. If it doesn't stop the thumb, the rest of the ad doesn't get a chance.

Ad copy should come after the bigger tests. Keep the same visual, then build winner variations that change one copy angle at a time. For example, change only the first line, or only the proof point, while keeping the headline and CTA steady. Watch engagement signals alongside primary conversions.

The trap is obvious but common: changing hook, offer, and format in one go.

If the winner changed three things at once, you found a better ad, but you didn't find the reason.
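A quick check before launch can catch the three-changes-at-once trap. This sketch compares two ad names that follow the same assumed seven-part convention as `TH_BEAUTY_PROS_Hook-Problem_Format-UGC_Offer-Bundle_V1` and lists which fields differ, ignoring the version tag. `changed_fields` and `is_clean_test` are hypothetical helpers, and they only see what the name encodes, so a change that isn't in the name slips through.

```python
# Assumed order of the parts before the trailing version tag.
FIELDS = ("market", "category", "stage", "hook", "format", "offer")


def changed_fields(name_a: str, name_b: str) -> list[str]:
    """Return the fields that differ between two ad names, ignoring the version tag."""
    a = name_a.split("_")
    b = name_b.split("_")
    if len(a) != len(FIELDS) + 1 or len(b) != len(FIELDS) + 1:
        raise ValueError("names do not follow the assumed 7-part convention")
    return [field for field, x, y in zip(FIELDS, a[:-1], b[:-1]) if x != y]


def is_clean_test(name_a: str, name_b: str) -> bool:
    """True when exactly one variable changed, so a result can be attributed to it."""
    return len(changed_fields(name_a, name_b)) == 1


control = "TH_BEAUTY_PROS_Hook-Problem_Format-UGC_Offer-Bundle_V1"
messy = "TH_BEAUTY_PROS_Hook-Result_Format-Static_Offer-Discount_V2"
clean = "TH_BEAUTY_PROS_Hook-Result_Format-UGC_Offer-Bundle_V2"
print(changed_fields(control, messy))  # ['hook', 'format', 'offer']
print(is_clean_test(control, clean))   # True
```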

A smart Meta ads testing framework doesn't need a giant budget. It needs clean inputs, steady cadence, and clear rules.

Pick one product line, one audience, and one variable to test (such as the hook) this week. Protect ROAS by avoiding auction overlap and audience cannibalization when scaling winning ads. Then keep testing like a lab, not a lottery. The Thailand brands that learn faster usually scale faster, too.